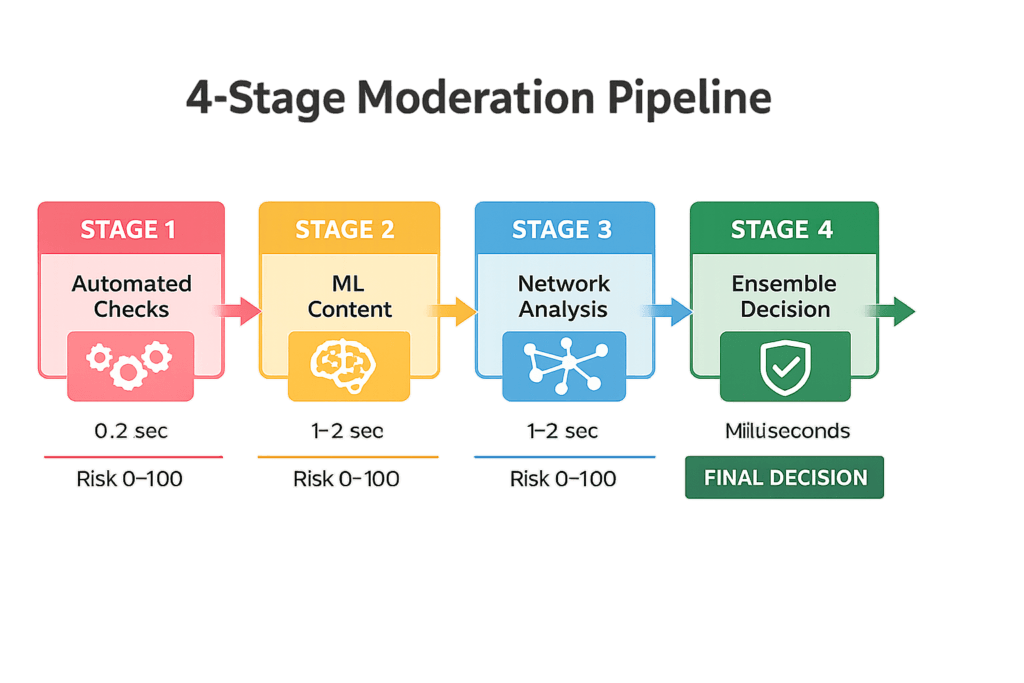

You hit “submit” on your restaurant review. 0.2 seconds later, 47 automated checks fire simultaneously. Your email domain is verified. Your device fingerprint is cross-referenced with 50 million other users. Your writing style is analyzed by a neural network trained on 2 billion reviews. Within milliseconds, a risk score appears: 42/100. Your review enters a queue. A human moderator will see it in 24 hours.

This is happening right now, everywhere.

When you post a review on Trustpilot, Google Reviews, or Amazon, your submission doesn’t immediately appear. Behind the scenes, sophisticated systems—combining machine learning, network analysis, behavioral forensics, and graph-based fraud detection—evaluate whether you’re a real person or a bot.

The stakes are enormous. Platforms face an existential problem: fake reviews destroy everything they’ve built. Consider a few scenarios:

• A restaurant receives a genuine 1-star complaint → competitors respond with 30 fake negative reviews from a bot network

• A business hires a review factory to post 500 fake 5-star reviews → platform’s trust signals become useless

• A travel site gets flooded with review ring activity → Google can’t trust its search results

• A marketplace loses authenticity → sellers leave, buyers distrust prices, the platform dies

The cost of getting this wrong is existential, not just financial. Yelp’s entire value proposition depends on trust. Amazon’s competitive advantage lives in review authenticity. Google’s search dominance relies on rating signals being genuine. TripAdvisor’s business model evaporates if fake reviews go undetected.

So platforms built defense systems. Expensive, sophisticated defense systems. And if you’ve ever had a genuine review held for manual review, or flagged as suspicious, you’ve bumped into one of these systems.

This guide explains how they work. Not to help you game the system—but to help you understand why your authentic review might get held, why platforms are conservative with new accounts, and how to build genuine credibility that platforms reward. Whether you’re a business managing reputation, a reviewer frustrated by false flags, or someone building a review system yourself, this is the technical deep-dive you need.

SECTION 1: HOW PLATFORMS ASSESS REVIEW AUTHENTICITY

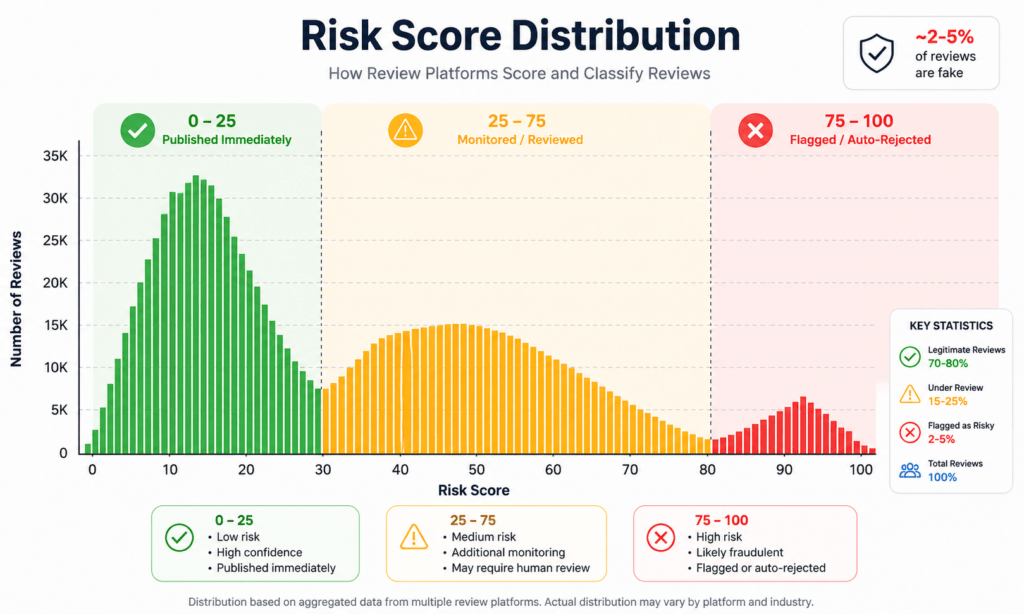

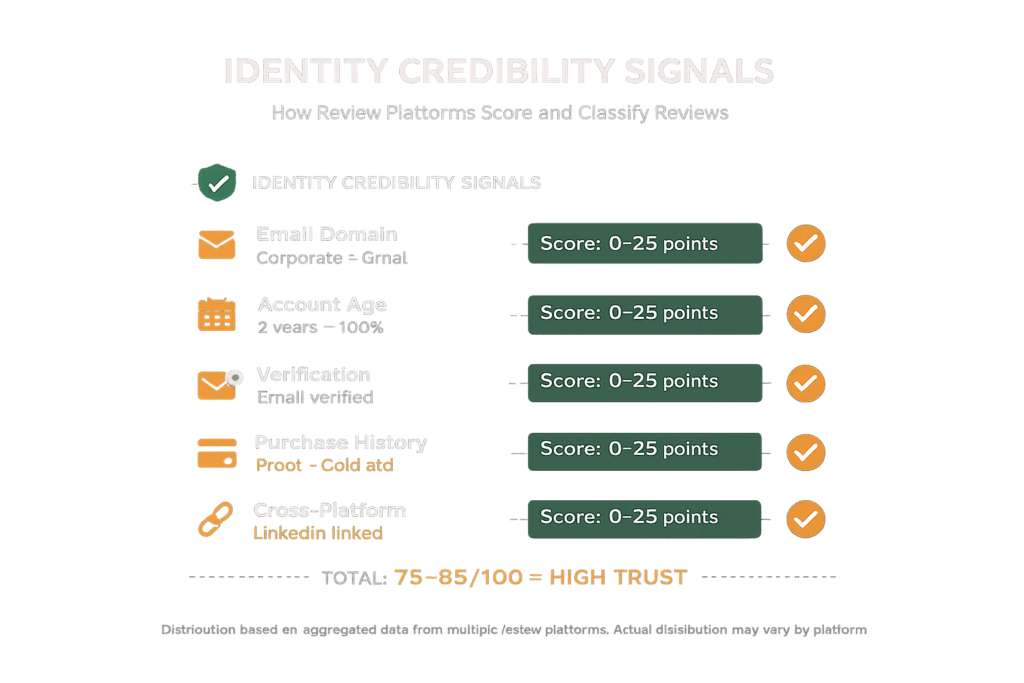

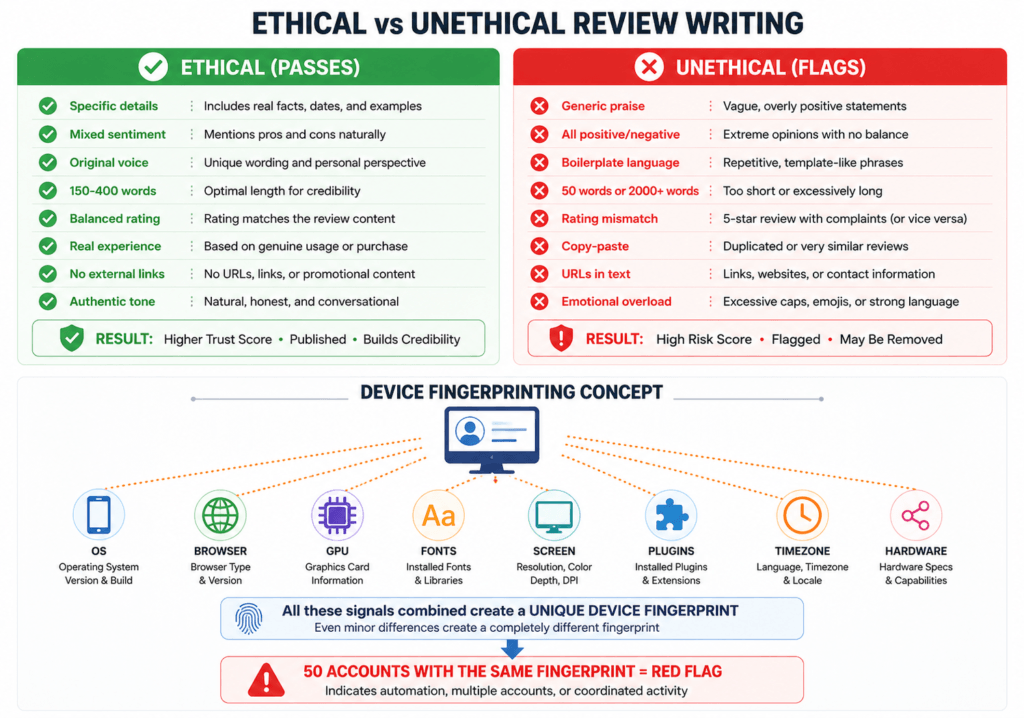
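The fingerprint check described in this section (many accounts sharing one device fingerprint within 24 hours) can be sketched as a sliding-window count. This is a minimal illustration, not any platform's implementation. It assumes each review event already carries an account ID, a timestamp, and a precomputed fingerprint hash. The `ReviewEvent` and `shared_fingerprints` names are hypothetical, and the 50-account threshold simply reuses the example from the text.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ReviewEvent:
    account_id: str
    fingerprint: str  # hypothetical hash of User-Agent, canvas/WebGL, screen, CPU cores, TLS ciphers
    posted_at: datetime


def shared_fingerprints(events, window=timedelta(hours=24), min_accounts=50):
    """Return fingerprints used by at least `min_accounts` distinct accounts
    within some `window`-long span of time."""
    by_fp = defaultdict(list)
    for ev in events:
        by_fp[ev.fingerprint].append(ev)

    flagged = set()
    for fp, evs in by_fp.items():
        evs.sort(key=lambda item: item.posted_at)
        counts = defaultdict(int)  # account_id -> reviews inside the current window
        start = 0
        for ev in evs:
            counts[ev.account_id] += 1
            # Drop events from the left until the window spans at most `window`.
            while ev.posted_at - evs[start].posted_at > window:
                old = evs[start].account_id
                counts[old] -= 1
                if counts[old] == 0:
                    del counts[old]
                start += 1
            if len(counts) >= min_accounts:
                flagged.add(fp)
                break
    return flagged
```

Sorting each fingerprint's events once and sliding a window over them keeps the per-fingerprint check linear after the sort. Counting distinct accounts rather than raw reviews means one prolific but honest user on a single device doesn't trip the rule.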

Identity Signals: Account Credibility Scoring

Platforms build a "credibility profile" from multiple signals:

• Email domain: a corporate address (john@microsoft.com) scores higher than a free Gmail address

• Account age: in an illustrative scoring scheme, a 5-year-old account scores 0.9/1.0 and a 7-day-old account scores 0.2. New accounts are riskier.

• Email verification: a verified email is a basic trust signal

• Purchase history: proof of purchase is the gold standard

• Cross-platform presence: linked LinkedIn or Facebook accounts increase credibility

✓ High-Trust Identity Markers

A 2-year-old account with a verified corporate email, a linked LinkedIn profile, and 50 prior reviews might start with a base identity score of roughly 75-85/100.

Device & Network Fingerprinting

Platforms can fingerprint a device without installing any software, by combining attributes the browser and connection already expose:

• User-Agent (OS, browser, version)

• Canvas/WebGL fingerprint (GPU rendering)

• Screen resolution and color depth

• Hardware concurrency (CPU cores)

• TLS cipher suites (network layer)

Why? Fraud rings are mechanical. If 50 accounts post reviews from the same device fingerprint within 24 hours, that's not 50 humans. It's one botnet.

Platforms also catch impossible travel: a review from a Singapore IP at 2:00 PM followed by one from a London IP at 2:15 PM is physically impossible (the flight takes 12+ hours), so the system flags the account as part of a likely bot network.

Behavioral Pattern Analysis

Humans are unpredictable. Bots are mechanical. Platforms exploit this:

• Review timing: real reviews scatter over time. Fake rings post in clusters (e.g., everything between 2 and 4 AM, suggesting a scheduled job).

• Session patterns: genuine reviewers engage (scroll, read other reviews, upload photos). Copy-pasters don't.

• Account velocity: real accounts post 1-3 reviews per month. An account posting 50 reviews in 48 hours triggers an immediate investigation.

• Rating distribution: honest reviewers give a mix of ratings. Fake reviewers give all 5 stars or all 1 star.

SECTION 2: HOW AI MODERATION SYSTEMS WORK
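The Stage 1 rule engine and the Stage 4 ensemble described in this section reduce to a few lines of arithmetic. Below is a minimal sketch using the section's own point values (+15 for an account under 7 days, +30 for sharing the business owner's IP, +10 for no purchase history as in the false-positive example), its routing thresholds, and its 0.25/0.40/0.35 weights. The spam-keyword and phone-number penalties, the phone regex, and all function names are hypothetical, and Stages 2 and 3 are taken as given input scores.

```python
import re
from dataclasses import dataclass

SPAM_KEYWORDS = ("viagra", "casino", "online poker")  # examples from the text
PHONE_RE = re.compile(r"\+?(?:\d[\s().-]*){10,}")      # rough: 10+ digits with common separators


@dataclass
class Submission:
    text: str
    account_age_days: int
    has_purchase: bool
    same_ip_as_owner: bool


def stage1_risk(s: Submission) -> int:
    """Rule-engine score, capped at 100. The +15/+10/+30 values follow the
    article's examples; the keyword and phone penalties are hypothetical."""
    risk = 0
    if s.account_age_days < 7:
        risk += 15
    if not s.has_purchase:
        risk += 10
    if s.same_ip_as_owner:
        risk += 30
    if any(k in s.text.lower() for k in SPAM_KEYWORDS):
        risk += 40  # hypothetical weight
    if PHONE_RE.search(s.text):
        risk += 20  # hypothetical weight
    return min(risk, 100)


def stage1_route(risk: float) -> str:
    """Stage 1 routing: < 20 publish, 20-50 escalate, > 50 human review."""
    if risk < 20:
        return "PUBLISH"
    if risk <= 50:
        return "STAGE_2"
    return "HUMAN_REVIEW"


def final_decision(stage1: float, stage2: float, stage3: float) -> tuple[float, str]:
    """Stage 4: weighted average of the three stage scores, then decision bands.
    Exact boundary values (60, 80) are assigned to HOLD FOR REVIEW here;
    the text leaves them ambiguous."""
    final = 0.25 * stage1 + 0.40 * stage2 + 0.35 * stage3
    if final < 25:
        label = "PUBLISH"
    elif final < 60:
        label = "PUBLISH + MONITOR"
    elif final <= 80:
        label = "HOLD FOR REVIEW"
    else:
        label = "AUTO-FLAG"
    return final, label
```

For the false-positive case discussed in this section (a 4-day-old account, no purchase, ordinary text), `stage1_risk` returns 25, which `stage1_route` escalates to Stage 2 instead of publishing. If Stages 2 and 3 then scored the review 70 and 80, `final_decision` would produce a final score of about 62, which lands in HOLD FOR REVIEW.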

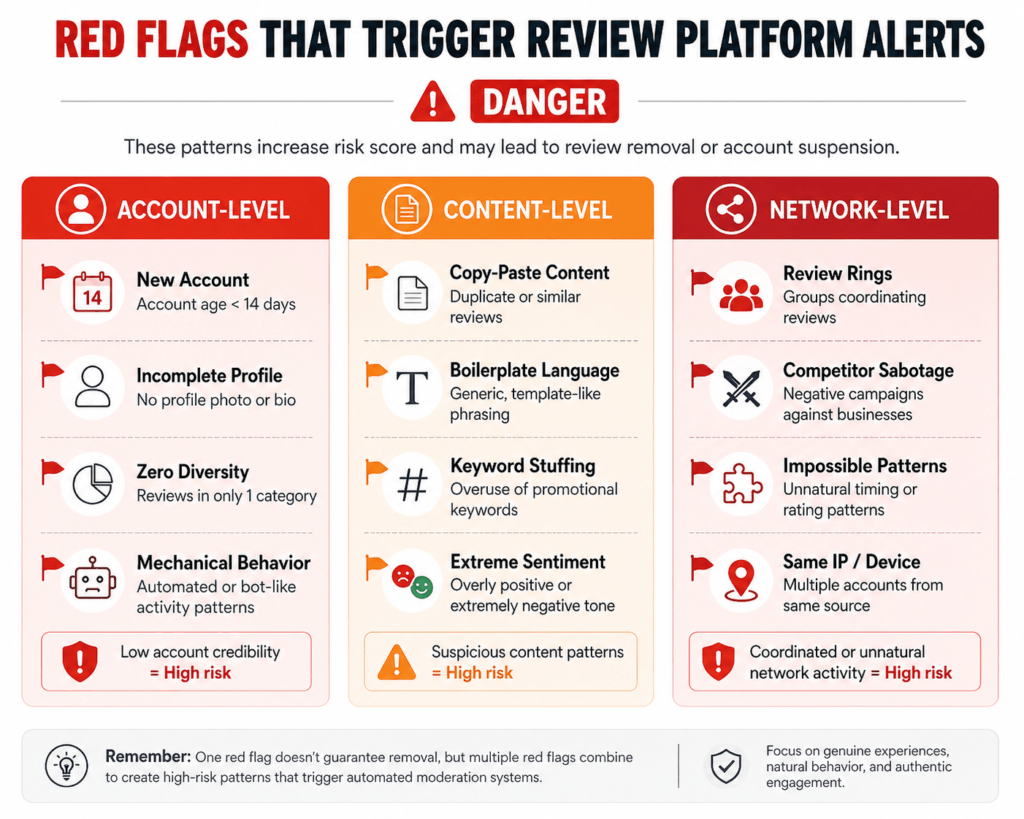
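Stage 2's semantic-similarity feature compares a new review against existing ones. Production systems compare transformer embeddings. The sketch below swaps in a much cruder stand-in, cosine similarity over word counts, to show the comparison step. This catches copy-paste and light edits but not genuine paraphrase. The 0.90 default mirrors the copy-paste indicator listed under Suspicious Profile Patterns, and `near_duplicates` is a hypothetical helper name.

```python
import math
import re
from collections import Counter


def _word_counts(text: str) -> Counter:
    # Lowercased word counts: a crude stand-in for a transformer embedding.
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine_similarity(a: str, b: str) -> float:
    """Cosine similarity of two texts' word-count vectors (0.0 if either is empty)."""
    va, vb = _word_counts(a), _word_counts(b)
    dot = sum(va[w] * vb[w] for w in va.keys() & vb.keys())
    norm = math.sqrt(sum(c * c for c in va.values())) * math.sqrt(sum(c * c for c in vb.values()))
    return dot / norm if norm else 0.0


def near_duplicates(new_review: str, corpus: list[str], threshold: float = 0.90) -> list[int]:
    """Indexes of existing reviews whose similarity to `new_review` meets the threshold."""
    return [i for i, old in enumerate(corpus) if cosine_similarity(new_review, old) >= threshold]
```

Because punctuation and case are discarded, "Highly recommend... great service!!" scores 1.0 against "Highly recommend, great service!". That is exactly the boilerplate pattern the later red-flag list describes.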

Many large platforms use ensemble models: several independent systems each score a review, and their scores are combined into one decision. Here's how the pieces fit together:

The Four-Stage Moderation Pipeline

Stage 1: Automated Ingestion Checks (milliseconds)

Regex filters and keyword matching catch spam keywords (viagra, casino, online poker), known phishing domains, and phone numbers in the review text.

A business rule engine adds risk points: "Account age < 7 days" = +15 risk points. "Same IP as business owner" = +30 risk points.

Output: a risk score from 0 to 100. Below 20, the review is published immediately. From 20 to 50, it proceeds to Stage 2. Above 50, it is flagged for human review.

Stage 2: ML-Based Content Classifier (seconds)

NLP and deep learning. The system vectorizes your review text with a transformer model such as BERT or RoBERTa, which is pretrained on large general-text corpora and typically fine-tuned on labeled reviews. Features extracted:

• Readability metrics (Flesch-Kincaid grade level)

• Sentiment distribution (how positive or negative)

• Named entity extraction (are you mentioning real product names?)

• Semantic similarity (is your text nearly identical to 100 other reviews?)

• Linguistic markers (pronoun usage, word choice patterns)

Output: a risk score from 0 to 100, plus confidence intervals.

Stage 3: Network & Behavioral Analysis (seconds)

Graph-based fraud detection. Platforms build a knowledge graph of users, devices, IPs, and businesses. If 50 new accounts all review the same restaurant from the same IP range, the graph screams "RING REVIEWER."

Output: a risk score from 0 to 100.

Stage 4: Ensemble Decision (milliseconds)

A weighted average of the three stage scores:

FINAL_RISK = 0.25 × Stage1 + 0.40 × Stage2 + 0.35 × Stage3

Decision: below 25 = PUBLISH; 25-60 = PUBLISH + MONITOR; 60-80 = HOLD FOR REVIEW; above 80 = AUTO-FLAG.

Why Genuine Reviews Get Flagged (False Positives)

You create an account to review a restaurant. Your review is detailed and genuine, but it gets flagged anyway. Your account is 4 days old (+15 points) and has no purchase history (+10 points), which puts your Stage 1 score at 25. That is too high to publish instantly, so the review moves on to deeper checks. Add a few more weak signals, such as an empty profile or a first review posted within hours of signing up, and the score can pass 50, which sends the review straight to a human moderator. That's a false positive: the system errs on the side of caution.

Industry estimates: about 2-5% of published reviews are fake. Of flagged reviews, about 15-25% are false positives. Human moderators are about 85-95% accurate.

SECTION 3: WHAT MAKES A REVIEW STATISTICALLY TRUSTWORTHY
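The trust scoring framework in this section is a weighted sum, sketched below. The sketch assumes, as the 0-100 interpretation bands imply, that the trust score is 100 times the weighted sum of four signal values in the 0-1 range. The framework doesn't say how raw data such as account age is mapped to those 0-1 values, so the sketch takes them as inputs. `trust_score` and `trust_band` are hypothetical names.

```python
WEIGHTS = {
    "account_age": 0.25,
    "review_count": 0.25,
    "category_diversity": 0.25,
    "rating_distribution": 0.25,
}

BANDS = [  # (minimum score, label), checked from the top down
    (80, "published immediately, weighted in rankings"),
    (60, "trusted"),
    (40, "moderate"),
    (20, "low trust"),
    (0, "held for review"),
]


def trust_score(signals: dict[str, float]) -> float:
    """Weighted sum of 0-1 signal values, scaled to 0-100."""
    for name in WEIGHTS:
        value = signals[name]  # raises KeyError if a signal is missing
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return 100 * sum(weight * signals[name] for name, weight in WEIGHTS.items())


def trust_band(score: float) -> str:
    """Map a 0-100 trust score to the interpretation bands."""
    for minimum, label in BANDS:
        if score >= minimum:
            return label
    return BANDS[-1][1]
```

Plugging in the table's low-score examples (0.01, 0.0, 0.0, 0.3) gives about 7.75, which is held for review. The high-score examples (1.00, 0.83, 1.00, 1.00) give about 95.75, which is published immediately.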

The Trust Scoring Framework

Here's an illustrative model, a simplified version of the kind of scoring platforms use. Each signal is scored from 0 to 1, weighted, and summed. Multiplying by 100 gives a 0-100 trust score.

| Signal | Weight | Example (Low Score) | Example (High Score) |
|---|---|---|---|
| Account Age | 25% | 7 days = 0.01 | 2 years = 1.00 |
| Review Count | 25% | 1 review = 0.0 | 100+ reviews = 0.83 |
| Category Diversity | 25% | All reviews are restaurants = 0.0 | Reviews across 10+ categories = 1.00 |
| Rating Distribution | 25% | All 5-stars = 0.3 (suspicious) | Mix of 2-5 stars = 1.00 (healthy) |

Interpretation:

• 80-100: published immediately and given weight in rankings

• 60-79: trusted

• 40-59: moderate

• 20-39: low trust

• 0-19: held for review

Suspicious Profile Patterns

🚩 High-Risk Indicators

• Account < 14 days old

• First review posted within 2 hours of account creation

• 100+ reviews in 48 hours

• All reviews the same rating (all 5-stars OR all 1-stars)

• Semantic similarity > 0.90 to other reviews (copy-paste detected)

• Phone numbers or external links in review text

• Reviews from the same IP as the business owner

SECTION 4: HOW TO WRITE REVIEWS THAT PASS MODERATION (ETHICALLY)

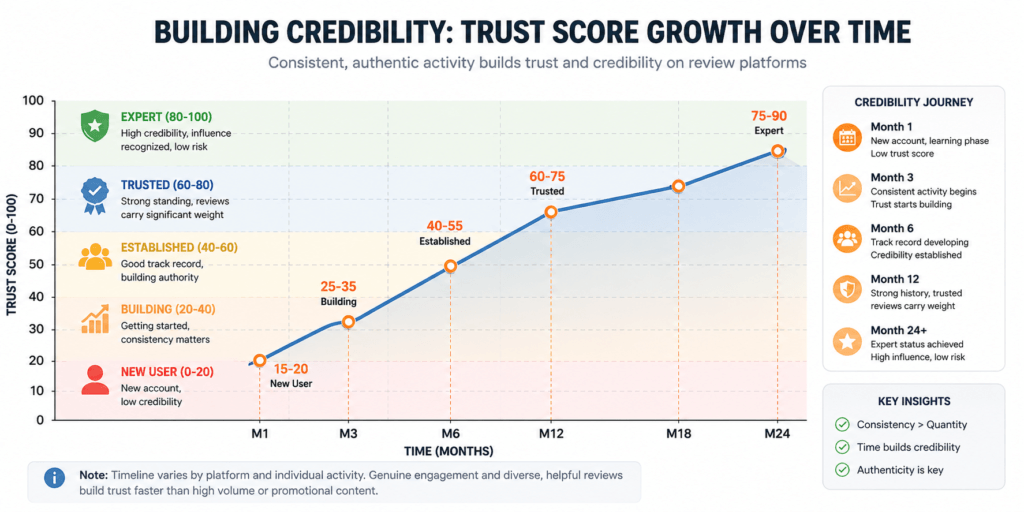
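Steps 6 and 7 below are mechanical enough to check before you hit submit. Here is a minimal self-check sketch. The regular expressions are rough approximations: the phone pattern treats any run of 10 or more digits as a number. The 150-400 word range is this guide's recommendation rather than a documented platform rule. `review_warnings` is a hypothetical helper.

```python
import re

URL_RE = re.compile(r"https?://|www\.", re.IGNORECASE)
EMAIL_RE = re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b")
PHONE_RE = re.compile(r"\+?(?:\d[\s().-]*){10,}")  # rough: 10+ digits with common separators


def review_warnings(text: str, min_words: int = 150, max_words: int = 400) -> list[str]:
    """Flag the mechanical issues from Steps 6 and 7; returns [] if none are found."""
    warnings = []
    words = len(text.split())
    if words < min_words:
        warnings.append(f"too short: {words} words (guideline {min_words}-{max_words})")
    elif words > max_words:
        warnings.append(f"too long: {words} words (guideline {min_words}-{max_words})")
    if URL_RE.search(text):
        warnings.append("contains a link")
    if EMAIL_RE.search(text):
        warnings.append("contains an email address")
    if PHONE_RE.search(text):
        warnings.append("contains a phone number")
    return warnings
```

This deliberately leaves out Steps 1 through 5. Specificity, balance, and voice are judgments a regex can't make.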

You don't need to game the system. Write honest, detailed reviews. Here's the framework:

Step 1: Be Specific

"I've been using it for 6 months in my home office" signals authenticity. "Amazing!" is vague and can trigger scrutiny.

Step 2: Include Real Details

Dates, product models, staff names, transaction context. These are hard to fabricate at scale.

Step 3: Balance Pros & Cons

"Great product, but battery life is inconsistent" reads as genuine. "ABSOLUTELY PERFECT!!!" reads as suspicious.

Step 4: Match Rating to Text

A 5-star rating paired with "Worst experience ever" is a red flag. Align them.

Step 5: Use Your Voice

Write naturally, not like marketing copy. Use contractions. Vary sentence length.

Step 6: Avoid Red Flags

No external links, competitor attacks, document images, or contact requests.

Step 7: Aim for 150-400 Words

Too short reads as low effort. Too long reads as rambling. The middle ground is ideal.

Building Credibility Over Time

The long-term strategy:

• Months 1-6: Complete your profile. Post 1-3 reviews per month. Diversify (don't just review restaurants). Mix ratings (not all 5-stars).

• Months 6-18: Establish consistent activity. Develop expertise in a category (if it comes naturally). Get verified purchases.

• Months 18+: Maintain consistency. Respond professionally to challenges.

By month 24, you're a "trusted contributor" with high review visibility. The trust score grows roughly like this:

• Month 1: 15-20 (new user)

• Month 3: 25-35 (building)

• Month 6: 40-55 (established)

• Month 12: 60-75 (trusted)

• Month 24: 75-90 (expert)

SECTION 5: COMMON RED FLAGS PLATFORMS MONITOR
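The impossible-travel flag in this section compares the distance between two IP-geolocated review locations with the time between the reviews. Here is a minimal sketch using the haversine great-circle distance. The 900 km/h ceiling (roughly airliner cruising speed) and the 100 km jitter floor are assumptions, not published platform thresholds. IP geolocation itself is approximate, so a real system would treat this as one signal among many.

```python
import math
from datetime import datetime, timedelta

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_KMH = 900.0  # assumption: roughly airliner cruising speed
MIN_DISTANCE_KM = 100.0    # assumption: ignore IP-geolocation jitter between nearby places


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in km between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def impossible_travel(loc1: tuple[float, float], t1: datetime,
                      loc2: tuple[float, float], t2: datetime) -> bool:
    """True if getting from loc1 to loc2 in the elapsed time would require
    moving faster than MAX_PLAUSIBLE_KMH."""
    km = haversine_km(*loc1, *loc2)
    if km < MIN_DISTANCE_KM:
        return False
    hours = abs((t2 - t1).total_seconds()) / 3600
    if hours == 0:
        return True
    return km / hours > MAX_PLAUSIBLE_KMH


if __name__ == "__main__":
    london, hong_kong = (51.5074, -0.1278), (22.3193, 114.1694)
    t0 = datetime(2024, 5, 1, 14, 0)
    print(impossible_travel(london, t0, hong_kong, t0 + timedelta(hours=2)))   # True
    print(impossible_travel(london, t0, hong_kong, t0 + timedelta(hours=14)))  # False
```

London to Hong Kong is roughly 9,600 km. Covering that in 2 hours implies about 4,800 km/h and gets flagged. Covering it in 14 hours implies under 700 km/h and does not.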

Account-Level Red Flags

• Throwaway email: account registered with a brand-new Gmail address created the same day as the review

• No profile data: no photo, bio, or linked social accounts

• Zero diversity: account only reviews one business or category

• Mechanical intervals: reviews posted at exactly 347-second intervals (suggests automation)

Content-Level Red Flags

• Copy-paste: semantic similarity > 0.85 to another review

• Boilerplate: "Highly recommend, great service!" appears in 50+ reviews from different accounts

• Keyword stuffing: business name repeated 20+ times

• Sentiment mismatch: 5-star rating + "Terrible experience, never again"

• External links: URLs, phone numbers, or email addresses in the review text

Network-Level Red Flags

• Ring reviewers: 50 accounts created the same day, all reviewing the same restaurant from the same IP range

• Competitor sabotage: account A gives Business X 5 stars and gives Business X's competitors 1 star

• Impossible travel: a review from London, then another 2 hours later from Hong Kong (flight time: 12+ hours)

• Same IP as business: review posted from the business's office or home network

SECTION 6: BUILDING SUSTAINABLE REPUTATION FOR YOUR BUSINESS
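This sketch works through the review-accumulation arithmetic in this section (100 customers a week, a 5% review rate, about 26 weeks in 6 months). It also adds one simple way an "artificial spike" in weekly review counts could be spotted. The spike rule flags a week with more than 3 times the median of the previous 4 weeks. It is a hypothetical illustration, not any platform's documented threshold, and both function names are made up for this example.

```python
from statistics import median


def expected_reviews(customers_per_week: int, review_rate: float, weeks: int) -> float:
    """Reviews accumulated from steady, organic requests."""
    return customers_per_week * review_rate * weeks


def spike_weeks(weekly_counts: list[int], factor: float = 3.0, baseline_weeks: int = 4) -> list[int]:
    """Indexes of weeks whose review count exceeds `factor` times the median of
    the preceding `baseline_weeks` weeks (baseline floored at 1 review so a quiet
    stretch doesn't make every small bump look like a spike)."""
    flagged = []
    for i in range(baseline_weeks, len(weekly_counts)):
        baseline = max(median(weekly_counts[i - baseline_weeks:i]), 1)
        if weekly_counts[i] > factor * baseline:
            flagged.append(i)
    return flagged


if __name__ == "__main__":
    print(expected_reviews(100, 0.05, 26))       # 130 reviews over ~6 months
    print(spike_weeks([5, 4, 6, 5, 5, 40, 5]))  # [5]
```

Steady asking produces the flat series in the second example's quiet weeks. A purchased batch of 40 reviews in one week stands out immediately against that baseline.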

If you're managing a business's reputation, here's what platforms reward:

What Platforms Want to See

✓ High-Trust Review Profiles

• Reviews from diverse, long-standing accounts

• A mix of ratings (4-5 stars on average, with some 3-stars)

• Specific, detailed feedback

• Verified purchases

• Natural posting frequency (no artificial spikes)

What to Absolutely Avoid

⛔ Never Do This

• Hire review factories (obvious fraud that detection systems are built to catch)

• Post fake reviews from multiple accounts

• Offer customers incentives for positive reviews (platforms detect this)

• Ask employees to post reviews from work networks (same IP = flags)

• Attack competitors in reviews (creates noise, doesn't help you)

• Post identical reviews across platforms (plagiarism detection catches this)

Legitimate Strategy to Earn Trust

Just ask real customers for reviews. If you serve 100 customers per week and 5% leave reviews, you get 5 reviews per week, a sustainable signal. Over 6 months (about 26 weeks), that adds up to about 130 reviews from diverse, natural sources, a profile no shortcut can match.

Respond professionally to criticism. When someone leaves a 2- or 3-star review, respond calmly and offer to resolve the issue. Platforms reward businesses that engage honestly, and it creates a narrative: "This business actually cares about feedback."

Don't panic about negative reviews. A business with nothing but 5-star reviews looks suspicious. A business with a 4.2-star average and detailed, mixed reviews looks trustworthy.

KEY TAKEAWAYS

✅ Review authentication is multi-dimensional. Platforms don’t rely on a single signal. They use identity, behavior, content, and network analysis working together.

✅ False positives exist, but are acceptable. Systems err toward caution: better to hold a genuine review for 24 hours than publish a fake one immediately.

✅ Authenticity wins. The easiest way to pass moderation is to write honestly. Specific details, balanced sentiment, and original voice are the signals platforms trust most.

✅ Building credibility takes time. A 2-year-old account with 50 reviews is trusted far more than a brand-new account, and that credibility compounds. Invest early.

✅ Organic growth beats shortcuts. For businesses, asking real customers for reviews (without incentives) builds trust faster than any manipulation tactic. Platforms reward this.

THE BOTTOM LINE

Trust systems are built to solve a real problem: fake reviews destroy value. They’re not perfect, and genuine reviews sometimes get held, but over time authenticity surfaces. The platforms have every incentive to get this right, because their business depends on it.

For consumers: Trust reviewers with long histories, diverse ratings, and specific details.

For reviewers: Write honestly. The system rewards it.

For businesses: Focus on delivering great experiences. Ask happy customers to share feedback. The rest follows naturally.
